If you ask most service leaders about AI, the problem isn’t a lack of ideas. It’s the opposite.

From GenAI copilots for technicians to predictive maintenance and automated triaging, the list of “potential” initiatives keeps growing. In many organizations, this backlog already exists: documented, discussed, and often partially explored.

Yet, very few of these initiatives move beyond the pilot stage. Even fewer scale. The issue isn’t awareness. It isn’t even capability.

The real challenge is deciding what to do first, and what not to do at all.

AI success in aftersales depends less on identifying use cases and more on the discipline of prioritization and sequencing. Without that, even well-intentioned programs quickly fragment into disconnected experiments.

Many organizations exploring AI in aftersales and service operations face the same challenge: too many use cases and no clear starting point. Whether it’s predictive maintenance, AI copilots, or intelligent scheduling, the real question is not what AI can do, but how to prioritize AI initiatives based on business impact and readiness.

The Noise vs Signal Problem

AI now sits at the center of most service transformation agendas. But without a clear filter, organizations tend to fall into a predictable pattern.

Multiple pilots are launched in parallel, each addressing a different use case. Data and IT teams are stretched across initiatives. Some projects stall midway when underlying data issues surface. Others fail to scale because they were never designed with integration in mind.

Individually, these challenges are manageable. Together, they create something more problematic: activity without outcomes.

This is the “noise vs signal” problem. Everything appears valuable. Everything feels urgent. But when everything is prioritized, nothing truly moves forward.

In aftersales, where margins are tight and uptime is critical, this kind of fragmentation is not just inefficient. It’s costly.

AI is a Portfolio, not a Project

Fragmentation starts with how AI is approached.

Most organizations treat AI as a single transformation program, a large initiative expected to deliver across multiple dimensions simultaneously. In practice, AI behaves very differently.

It is not one initiative. It is a portfolio of decisions. Some of these initiatives deliver incremental efficiency, while others unlock entirely new value pools, especially when AI begins to orchestrate decisions across workflows, not just support them.

Treating all of these as part of one “AI program” creates confusion. It leads to inconsistent expectations and poor sequencing.

A more effective approach is to think in layers:

- Some initiatives generate quick efficiency gains

- Others strengthen the underlying data and systems

- A few act as long-term strategic differentiators

The objective is not to identify the single best use case. It is to build a sequence where early initiatives create the foundation, and funding, for what comes next.

Case Study: Strategic Patience in Practice

A global diversified manufacturer I worked with took a deliberately contrarian approach to AI.

While competitors were launching multiple GenAI pilots, they chose to hold back. Not due to lack of ambition, but because they recognized a fundamental gap: their data wasn’t ready.

Instead of chasing high-visibility AI initiatives, they focused on fixing the foundation:

- Cleaning and standardizing service data across business units

- Integrating fragmented ERP and service systems

- Establishing clear governance for data, IP, and AI usage

This wasn’t positioned as a delay. It was a sequencing decision.

Their view was simple: AI will only scale when the foundation is ready.

The result is a very different trajectory. When they move, they will scale across the enterprise, while others are still trying to operationalize isolated pilots.

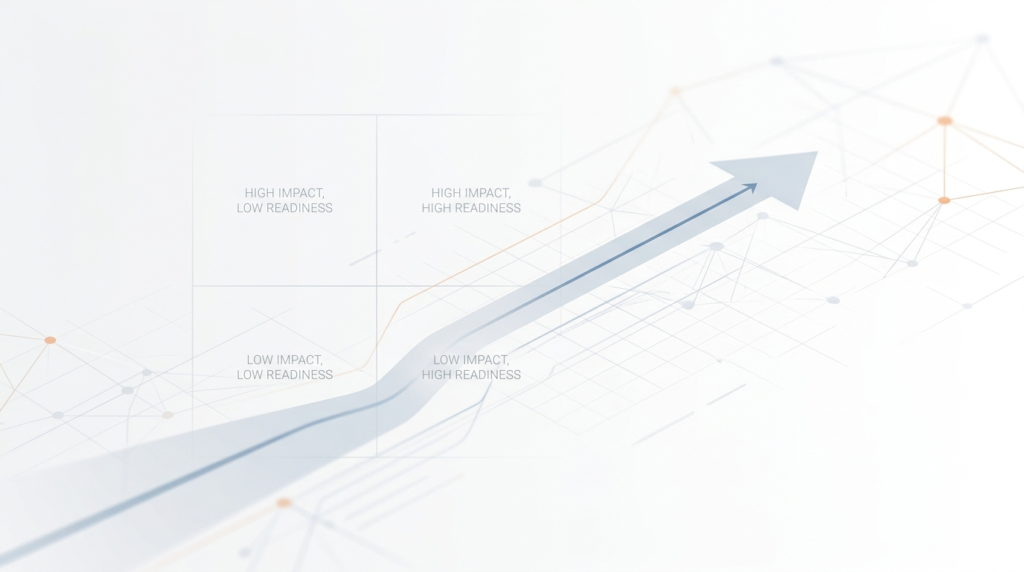

AI Prioritization Framework: Business Impact vs Data Readiness

Business impact is usually well understood. Leaders can assess whether an initiative improves key outcomes such as first-time fix rate, resolution time, or warranty costs.

Data readiness, however, is often overestimated. Data may exist, but it is frequently:

- Fragmented across systems

- Inconsistently structured

- Difficult to access in real time

This gap is where most AI initiatives fail. It explains why many high-impact ideas, especially predictive and autonomous use cases, never move beyond early stages.

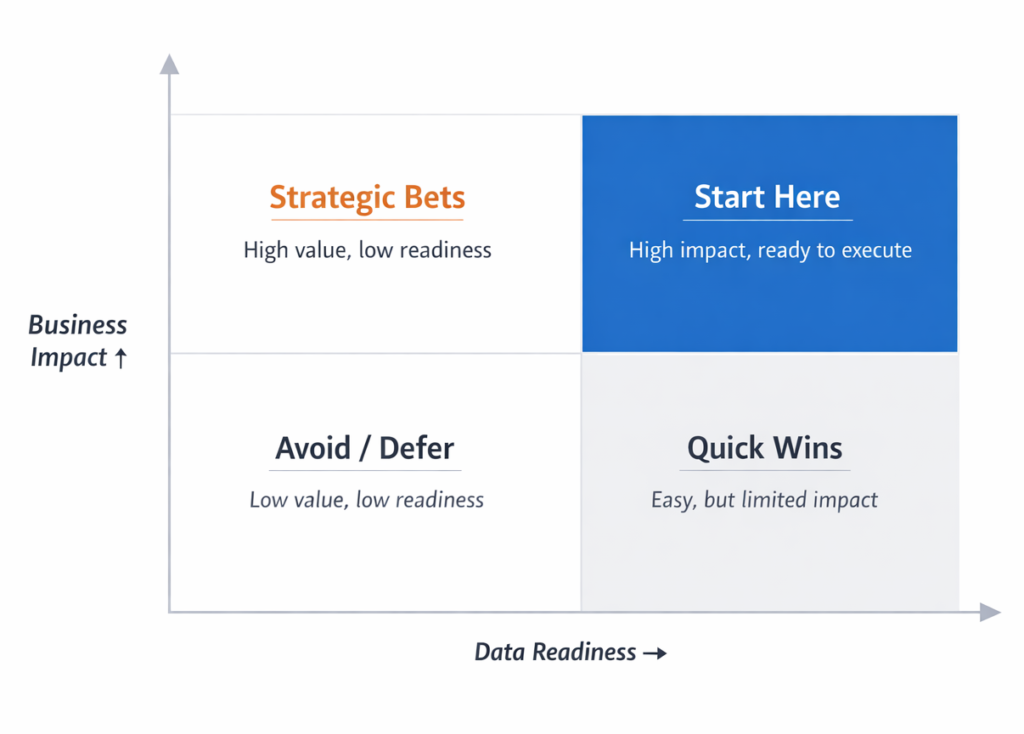

A practical way to bring discipline into AI decision-making is to evaluate initiatives across two dimensions: business impact and data readiness.

This helps separate ideas that are immediately actionable from those that require foundational work. More importantly, it prevents organizations from starting with high-value AI initiatives that they are not yet equipped to execute.

The matrix highlights an important pattern.

Most organizations are naturally drawn toward high-impact initiatives, even when data readiness is low. These often become “strategic bets” that stall due to missing foundations.

In contrast, initiatives in the top-right quadrant, where impact and readiness are both high, tend to deliver faster results and build momentum. These are the most effective starting points.

The bottom-right quadrant can be useful for early progress, but focusing only on quick wins limits long-term value. The bottom-left quadrant, on the other hand, should be actively avoided.
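The quadrant logic described above can be sketched in a few lines of Python. This is only an illustration: the 0-to-1 scores, the 0.5 threshold, the `Initiative` class, and the sample backlog are all assumptions made for the example, not part of the framework itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    impact: float     # assumed 0-1 score for business impact
    readiness: float  # assumed 0-1 score for data readiness


def quadrant(item: Initiative, threshold: float = 0.5) -> str:
    """Map an initiative to one of the four quadrants of the matrix."""
    high_impact = item.impact >= threshold
    high_readiness = item.readiness >= threshold
    if high_impact and high_readiness:
        return "start here"     # top-right: high impact, high readiness
    if high_impact:
        return "strategic bet"  # high impact, low readiness
    if high_readiness:
        return "quick win"      # bottom-right: low impact, high readiness
    return "avoid"              # bottom-left: low impact, low readiness


# Hypothetical backlog; the scores are illustrative, not measured.
backlog = [
    Initiative("technician knowledge copilot", impact=0.7, readiness=0.8),
    Initiative("predictive maintenance", impact=0.9, readiness=0.3),
    Initiative("case summarization", impact=0.4, readiness=0.9),
    Initiative("autonomous dispatch", impact=0.3, readiness=0.2),
]

for item in backlog:
    print(f"{item.name}: {quadrant(item)}")
```

In practice the scoring is the hard part. A sketch like this is only useful once impact and readiness are assessed honestly, and readiness in particular tends to be overestimated.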

Not sure where your initiatives land on this matrix?

Take the free AI Readiness Assessment

The goal is not to pick the most ambitious idea, but to start with the highest-impact initiative your organization is ready to execute today.

High-value ideas with low data readiness are not starting points. They are strategic bets.

Every AI decision involves a trade-off: not just what to do, but when to do it. Starting too early can be as costly as waiting too long. Many of these trade-offs become clearer when you look at how AI actually gets executed in service environments, especially the gap between identifying use cases and delivering outcomes.

The most effective starting point lies where impact and readiness intersect.

Strategic Trade-off

Cost of Inaction vs Premature Action

| The Cost of Inaction (Waiting Too Long) | The Cost of Premature Action (Starting Too Early) |
| --- | --- |
| Market Irrelevance | Market Irrelevance |
| Talent Loss | Technical Debt |
| Knowledge Decay | Loss of Trust |

The Three Horizons of Aftersales AI

Prioritization answers the question of what to do. Sequencing answers when.

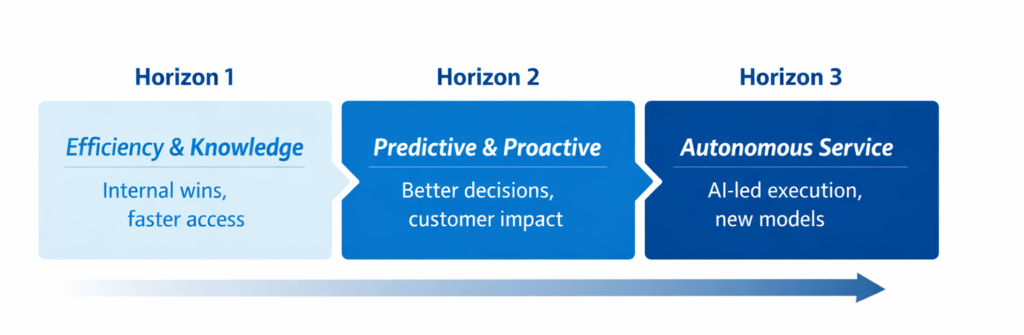

A more practical way to approach this is through three horizons.

- In the first horizon, the focus is on efficiency and knowledge access. These initiatives improve how technicians and agents interact with information, making it easier to retrieve manuals, summarize cases, or access historical insights. They are relatively low risk and deliver immediate operational value.

- The second horizon shifts toward prediction and proactive decision-making. Here, AI begins to influence outcomes, optimizing schedules, forecasting parts demand, or anticipating failures. These initiatives require stronger data foundations and better system integration.

- The third horizon represents a more fundamental shift. AI moves from supporting decisions to making them, enabling autonomous workflows and outcome-based service models. These initiatives are high impact but also highly dependent on maturity across data, processes, and governance.

Instead of attempting advanced AI capabilities upfront, organizations can build progressively: from improving access to knowledge, to enabling predictive insights, and eventually to more autonomous service operations.

The progression across these horizons is not just about technology maturity. It reflects improvements in data quality, system integration, and organizational trust. Most organizations struggle not because they lack ambition, but because they attempt to jump directly to advanced use cases without building the necessary foundation.
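One way to make this sequencing concrete is a simple gate that reports the highest horizon an organization is ready for. The sketch below is hypothetical: the dimension names, the 0-to-1 maturity scores, and the 0.6 threshold are assumptions chosen for illustration, loosely based on the prerequisites named for each horizon above.

```python
# Hypothetical maturity dimensions required before each horizon is attempted.
# Horizon 1 has no prerequisites here, since it is treated as the starting point.
PREREQUISITES = {
    1: set(),
    2: {"data_foundations", "system_integration"},
    3: {"data_foundations", "system_integration", "process_maturity", "governance"},
}


def highest_ready_horizon(maturity: dict[str, float], threshold: float = 0.6) -> int:
    """Return the highest horizon whose prerequisites all meet the threshold.

    Horizons are checked in order, so a gap at horizon 2 also blocks horizon 3.
    """
    ready = 1
    for horizon in (2, 3):
        needed = PREREQUISITES[horizon]
        if all(maturity.get(dim, 0.0) >= threshold for dim in needed):
            ready = horizon
        else:
            break
    return ready


# Illustrative self-assessment, not real data.
scores = {"data_foundations": 0.8, "system_integration": 0.7, "governance": 0.4}
print(highest_ready_horizon(scores))
```

The ordered check reflects the point of the model: weak foundations cap how far an organization can go, regardless of ambition.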

Many organizations are drawn to the second and third horizons. But without the groundwork laid in the first, progress is often slower than expected.

Horizon 1 is not optional. It is where both data quality and organizational trust are built.

Discover which horizon your aftersales org is ready for

The Kill-Switch: Knowing What Not to Do

In many cases, what appears to be an AI problem is not an AI problem at all. It is a data, process, or system design issue in disguise.

Organizations often default to AI when simpler interventions, such as process standardization, improved data availability, or targeted automation, could deliver faster and more reliable outcomes. Applying AI on top of fragmented workflows rarely creates value; it amplifies existing inefficiencies.

Not every problem requires AI, and forcing it often delays real outcomes.

One of the most important, but least discussed, capabilities in an AI roadmap is the ability to say no. Or at least, not yet. Clear decision criteria help prevent organizations from investing in initiatives that are unlikely to succeed in the current environment.

If the required data is inaccessible or unreliable, the initiative should be postponed. If the underlying process is inconsistent, it should be fixed before being automated. If there is no clear ownership from the business, the initiative is unlikely to scale. These decisions are not about reducing ambition. They are about maintaining focus.
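These three criteria can be written down as an explicit checklist so that "not yet" becomes a recorded decision rather than a debate. The sketch below is a minimal illustration: the `ReadinessCheck` fields and the `kill_switch` function are hypothetical names, and a real assessment would need evidence behind each yes or no.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadinessCheck:
    data_accessible_and_reliable: bool
    process_consistent: bool
    business_owner_named: bool


def kill_switch(check: ReadinessCheck) -> list[str]:
    """Return the reasons to say "not yet"; an empty list means proceed."""
    reasons = []
    if not check.data_accessible_and_reliable:
        reasons.append("postpone: required data is inaccessible or unreliable")
    if not check.process_consistent:
        reasons.append("fix the underlying process before automating it")
    if not check.business_owner_named:
        reasons.append("assign clear business ownership before scaling")
    return reasons


# Illustrative case: the data is usable, but the process and ownership are not settled.
blockers = kill_switch(ReadinessCheck(True, False, False))
for reason in blockers:
    print(reason)
```

Returning the list of reasons, rather than a single yes or no, keeps the focus on what has to be fixed before the initiative is revisited.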

Scenario: The Predictive Maintenance Trap

Consider an organization looking to deploy predictive maintenance across its installed base. On paper, the value is clear: reduced downtime, lower service costs, better customer outcomes.

But reality looks different. A large portion of assets lack telemetry. The rest generate data, but across disconnected systems with inconsistent formats.

At this point, the organization faces a choice: push forward with a compromised model, or step back.

The more effective path is to pause. Instead of forcing a predictive solution, the organization shifts to a Horizon 1 initiative to improve technician productivity through better knowledge access, while fixing the underlying data challenges.

The lesson: high-value ideas are not always the right starting point.

Conclusion: From Ideas to Readiness

AI does not fail because of a lack of imagination. It fails because of a lack of decision discipline.

The organizations that succeed in aftersales are not the ones running the most pilots. They are the ones that treat AI as a sequence: prioritizing based on readiness, building the right foundation, and resisting the urge to do everything at once.

Ready to decide what to do first? Start with the free AI Readiness Assessment

The challenge is not knowing what AI can do. It’s deciding what it should do first, and having the discipline to wait on the rest.