Picture a leadership team walking out of a strategy offsite. The slides were sharp. The use cases were compelling. The head of digital has a roadmap with phased milestones, and the board signed off on the investment. Everyone in the room felt the momentum.

Three months later, the pilot has stalled. Data isn’t in the shape the model needs. The process the AI was meant to augment turns out to have three undocumented variants across regions. The integration that looked straightforward on the architecture slide requires six months of middleware work nobody scoped.

What if the roadmap was never the problem?

The Comfort of Having a Roadmap

Organisations don’t invest in AI roadmaps to fail. They invest in them to feel certain.

There is something deeply reassuring about a well-structured roadmap. It signals that the organization has thought this through: leadership is aligned, use cases have been identified, and there is a plan. And in most organizations pursuing AI today, these three things genuinely exist. There is a roadmap. There are defined use cases. There is, at least nominally, leadership buy-in.

The problem is not that these things are false. The problem is that they are visible, and visible signals of progress are easy to mistake for actual capability.

The easiest things to agree on are rarely the things that determine success.

The Cost of Getting This Wrong Is Now Visible

Enterprise AI readiness is now a board-level priority where investment is tracked and ROI is expected.

And when initiatives fail, the cost isn’t just financial. Failure reduces confidence in the next initiative, stalls momentum, and makes future funding harder to secure.

A team that spent 18 months on a capability only to hit execution blockers doesn’t just lose the initiative. They lose credibility and sometimes the mandate to try again.

AI failure is no longer a pilot issue; it’s a portfolio risk.

Why “We’re Ready” Is So Easy to Believe

Strategy, vision, and use cases require agreement, not capability. When a leadership team sits in a room and reaches consensus on where AI should take the business, that consensus is real and it matters. But it is a social achievement, not an operational one. It tells you that people are pointed in the same direction. It tells you very little about whether the organization can execute in that direction.

Use cases reinforce this. Identifying a compelling use case, such as predictive maintenance, intelligent field dispatch, or automated warranty triage, is intellectually satisfying work.

It creates energy and produces artefacts: slides, business cases, vendor shortlists. These artefacts look like progress. They’re often mistaken for proof that execution is within reach.

| Visible & Easy to Align On | Hidden & Hard to Measure |
|---|---|
| Vision & Strategy | Data Quality & Availability |
| Use Case Definition | Process Maturity |
| Leadership Alignment | System Integration |
| Roadmap & Timeline | Execution Capability |

What Actually Determines Whether AI Works

When AI initiatives stall, and many do, the post-mortem almost never points to the idea. The idea was usually fine. What it points to is the infrastructure underneath the idea: the data that wasn’t ready, the process that didn’t hold, the integration that couldn’t be delivered in time.

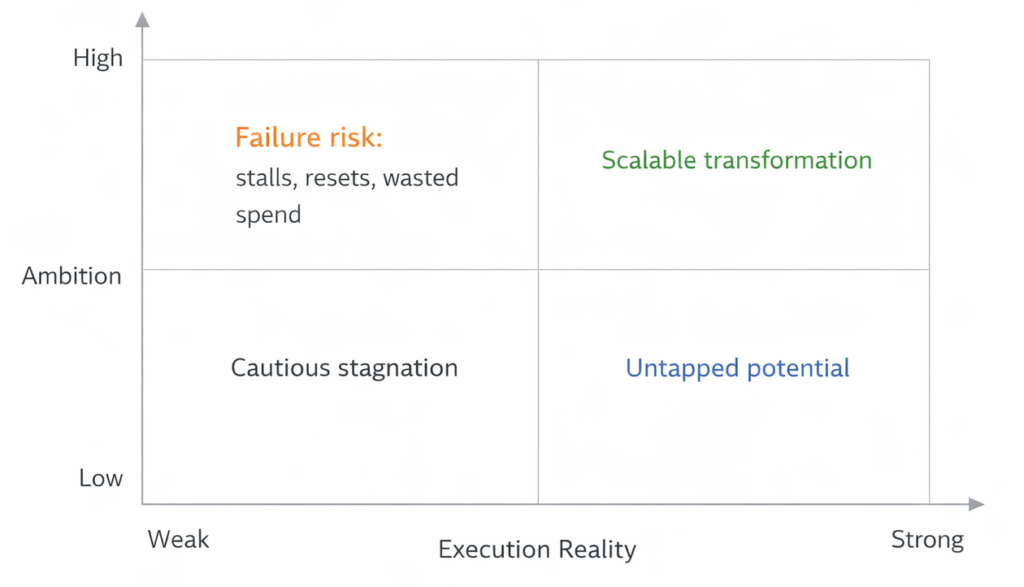

When Ambition Quietly Outruns Reality

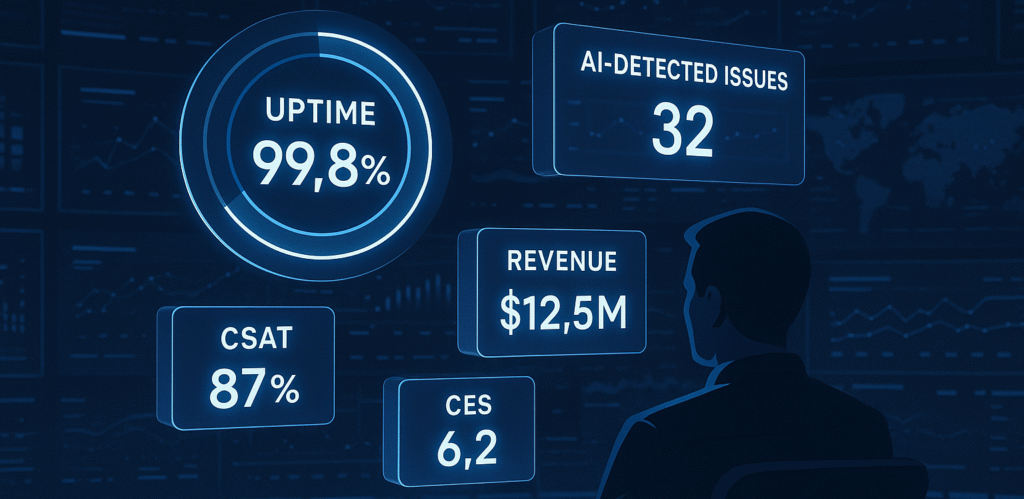

Here is the pattern. Strategy looks strong because it’s visible and agreed upon. Execution gaps stay hidden because no one built a structured way to measure them before the work began. Early pilots create false confidence: a proof-of-concept in a controlled environment doesn’t reveal whether the foundations will hold at scale.

Those foundations are data quality and accessibility, process standardization, system integration, execution capability, and governance. These are the dimensions most roadmaps assume are covered but rarely verify. If one is weak, progress slows. If several are weak, nothing scales.

Most organisations don’t fail because of bad strategy. They fail because ambition quietly outpaces execution capability, and no one measured the gap before it mattered.

Why the Best Teams Move Faster by Slowing Down First

There is a deeply ingrained instinct to equate speed with starting. Move fast. Build momentum. In AI transformation, this instinct is often what makes execution harder than it needed to be.

Teams that skip the readiness check pay for it later. They hit data problems mid-sprint, discover process complexity after they’ve built around it, and face integration blockers they didn’t scope. Each reset costs time, money, and internal credibility. The speed gained at the start gets consumed, with interest, by the friction encountered later.

Teams that check readiness first sequence better. They identify the highest-risk dependency before it becomes a blocker. They scope more accurately, start narrower, and scale with fewer resets.

Readiness doesn’t slow transformation down. Unexamined gaps do.

Speed doesn’t come from doing more. It comes from doing the right things in the right order.

Want to know where your roadmap is exposed before execution reveals it?

The AI Readiness Assessment maps your organization across 7 dimensions and shows you exactly where to focus first.

The Question to Ask Before Your Next Initiative

Most AI planning conversations start with the same question: What should we build next?

It’s the wrong starting point, not because use case selection doesn’t matter, but because selecting a use case before verifying whether the foundations exist to deliver it is like choosing a destination before checking whether the vehicle will make the journey.

The question high-performing teams ask first is a different one: Are we actually ready to make this work?

If your roadmap assumes readiness, but you haven’t measured it, you’re operating on assumption, not strategy.

Most organizations don’t have a structured way to answer that. Gaps don’t announce themselves during planning; they reveal themselves during execution, which is precisely the worst time to discover them. A structured AI readiness framework maps the dimensions that roadmaps consistently assume but rarely check: data and process maturity, integration capability, execution readiness, and governance. It makes the hidden side of the picture visible before the work begins, not after it stalls.

If you’re unsure how to assess AI readiness in your organisation, the AI Readiness Assessment offers a structured starting point.

The AI Readiness Assessment is free

It takes 10–12 minutes and gives you a scored view across all 7 dimensions, including your weakest link. No jargon. No sales call required.